The Power of Open Source Software

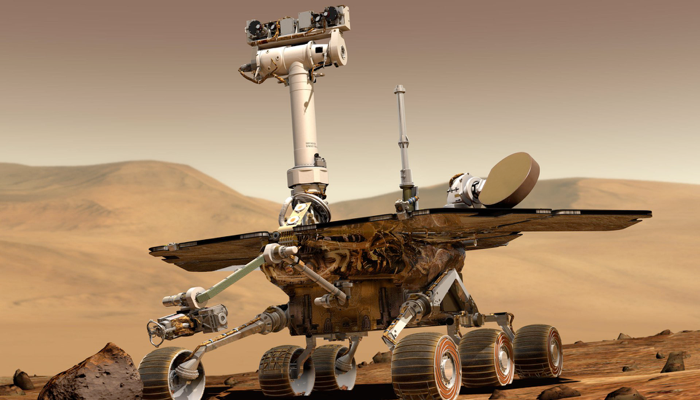

Two decades ago, a piece of open source software I wrote for a Canadian HPC project caught the attention of someone working for NASA JPL, and after a few emails and a call regarding our experience applying it, a modified version of it was used in a small way to help the Mars Exploration Rover (MER) mission involving the rovers Spirit and Opportunity.

With last week’s announcement that the MER mission is officially over, it’s nice to know that a piece of software I wrote helped, even if it was in a small way - it made me smile over coffee that morning, and it’s made me smile several times since then!

Essentially, in the very early 2000s I was implementing two Beowulf clusters running MPICH for SHARCNET, after being introduced to them by Compaq. Compaq had bought DEC, so the early version of the SHARCNET cluster consisted of DEC/Compaq Alphas interconnected with a Quadrics switch. One cluster was at UWO and called Great White (running Linux). The other was at McMaster and called Deep Purple (running Tru64 UNIX).

The problem they were having at the time was optimizing the jobs that ran on the cluster (one astronomer at McMaster generated gigabytes of data per hour refining images from an X-ray telescope).

This involved a lot of Alpha-specific tuning of MPICH, but it wasn’t enough. What the software needed to get better at was predicting when a particular node would “likely” finish computing its part of the job, and knowing which nodes would compute which parts of the job in the first place. This got a lot harder as more nodes were added (anything above 128 nodes was like predicting the weather two weeks out).

After a lot of failed brainstorming, I ended up capturing data from the jobs using tokens inserted into each job, and then analyzing the results with regression analysis.

What I found after a few weeks of mining the data in SPSS was that I could approximate which node would compute a piece of data, and how long it would take, based on 4 different factors that were not directly related to “how” things got executed at all.

With a small calculation in C, done before the work was distributed with MPICH, the prediction turned out to be accurate 99.92% of the time. The other 0.08% of the time, the affected work could be stored, re-executed, and slotted into the results quite quickly.
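The post doesn’t say what the four factors were or exactly how the calculation worked, so what follows is only a minimal C sketch of the general idea under stated assumptions. The names `ChunkFeatures`, `TimeModel`, `predict_time`, `assign_chunks`, and `flag_mispredictions` are hypothetical; the four features are placeholders; the coefficients are assumed to come from a regression fitted offline (as was done in SPSS); and the “earliest predicted finish” greedy assignment and relative-error threshold are swapped-in stand-ins, not necessarily the original logic.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical: the four regression inputs for one chunk of work.
 * The real factors are not named in the post; these are placeholders. */
typedef struct {
    double f[4];
} ChunkFeatures;

/* Linear model assumed to be fitted offline:
 * t = intercept + beta[0]*f[0] + beta[1]*f[1] + beta[2]*f[2] + beta[3]*f[3] */
typedef struct {
    double intercept;
    double beta[4];
} TimeModel;

/* Predicted compute time for one chunk. */
double predict_time(const TimeModel *m, const ChunkFeatures *c) {
    double t = m->intercept;
    for (int k = 0; k < 4; k++)
        t += m->beta[k] * c->f[k];
    return t;
}

/* Greedy stand-in: give each chunk to the node with the earliest predicted
 * finish time (ties go to the lowest node index). Fills owner[i] and
 * node_busy[j], and returns the predicted makespan of the whole job. */
double assign_chunks(const TimeModel *m, const ChunkFeatures *chunks,
                     size_t n_chunks, double *node_busy, size_t n_nodes,
                     size_t *owner) {
    assert(n_nodes > 0);
    for (size_t j = 0; j < n_nodes; j++)
        node_busy[j] = 0.0;
    for (size_t i = 0; i < n_chunks; i++) {
        size_t best = 0;
        for (size_t j = 1; j < n_nodes; j++)
            if (node_busy[j] < node_busy[best])
                best = j;
        node_busy[best] += predict_time(m, &chunks[i]);
        owner[i] = best;
    }
    double makespan = 0.0;
    for (size_t j = 0; j < n_nodes; j++)
        if (node_busy[j] > makespan)
            makespan = node_busy[j];
    return makespan;
}

/* After the run: mark chunks whose actual time missed the prediction by more
 * than rel_tol (as a fraction of the prediction) so they can be stored and
 * re-executed. Returns how many chunks were marked. */
size_t flag_mispredictions(const TimeModel *m, const ChunkFeatures *chunks,
                           const double *actual, size_t n_chunks,
                           double rel_tol, int *redo) {
    size_t count = 0;
    for (size_t i = 0; i < n_chunks; i++) {
        double p = predict_time(m, &chunks[i]);
        double err = actual[i] - p;
        if (err < 0)
            err = -err;
        redo[i] = err > rel_tol * p;
        count += (size_t)redo[i];
    }
    return count;
}
```

In a real MPICH job, the `owner` array would presumably be computed on the root rank before the work is sent out; the MPI calls are left out here so the sketch compiles on its own.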

Basically, it was a crude shortcut that worked. Job throughput (on jobs that chewed up a lot of grant money per hour of cluster time) went up roughly six- to eightfold depending on your workload, which meant you got 6-8 times as much done in the same time on the cluster. The only catch was that it was accurate only for that cluster configuration and platform (DEC Alpha), and would need to be radically changed for other systems.

Since there was no GitHub back in those days, we posted open source software on our university and project FTP servers (others used SourceForge, but that was frowned upon in academia at the time).

And academics loved to regularly peruse the code on other universities’ sites (people built web indexes galore to make it easier to find code at any university).

NASA and many other organizations also perused these code bases, and it wasn’t uncommon back then to call or email the authors of software when you had questions (especially since we listed our phone numbers, extensions, and email addresses in the software READMEs).

So I got calls from a few people about it. One was from Virginia Tech, who were implementing a PowerPC (PPC) cluster. The other was from someone at NASA JPL who was mainly interested in how I came up with the solution and how it could be modified to suit his use case (quickly simplifying input from the cameras and sensors on the MER rovers).

We had some nice emails and a very long phone discussion, and in the end they modified my software to suit their needs! A few months later, once everything was done (i.e. at code freeze), I even got a nice thank-you for my help, along with a link to his modifications (which were actually brilliant; NASA has some very talented developers).

The whole purpose of open source software is to keep software evolving through human collaboration without being impeded by things that say “no, you can’t share that because X person/company owns it and they wouldn’t approve.” It feels good to write open source software because you feel like you are contributing to the world. And it feels even better when you write some open source software that contributes to Mars ;-)