The Power of RISC architecture

The scene from the 1995 movie Hackers shown above is right on the mark for its time. After all, by the mid-1990s, Reduced Instruction Set Computer (RISC) processors were seen as the future of computing by most of the tech industry.

By using a simplified instruction set, computers with RISC processors at the time could perform many tasks in as little as a quarter of the time needed by computers that had a traditional Complex Instruction Set Computer (CISC) processor. RISC processors also required less circuitry, which left manufacturers room to add more functionality, such as crypto accelerators, memory management controllers and specialized floating-point arithmetic units.

Most PCs and Macs of the early 1990s used Intel or Motorola CISC processors. And while RISC processors were available at the time, they were mostly used in very expensive high-end UNIX workstations. Apple used PowerPC RISC processors in their Macs from the mid-1990s, but switched entirely to the Intel CISC processors that dominated the market in the mid-2000s. In fact, by the late 2000s, most RISC processor platforms had faded from the mainstream. The main exception was ARM (originally the Acorn RISC Machine), which is used in nearly all low-power and mobile devices. For example, your smartphone, Google Home, and car navigation system all use ARM processors.

There was no technical barrier to producing a more powerful ARM processor for high-end computing; it just wasn’t done in earnest until the late 2010s. Amazon’s Graviton server processor (2018) and Apple’s M1 processor (2020) are two examples of doing just that, and both illustrate the power of RISC architecture.

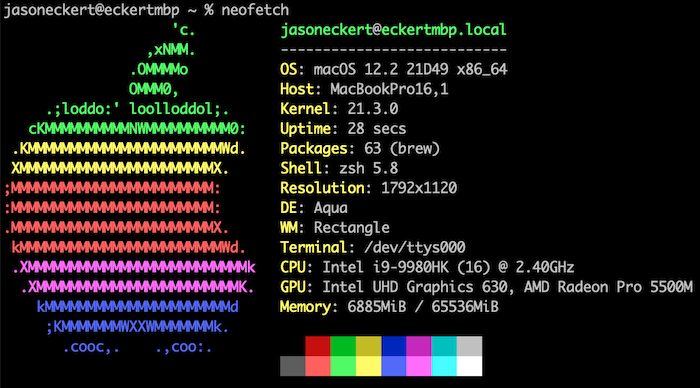

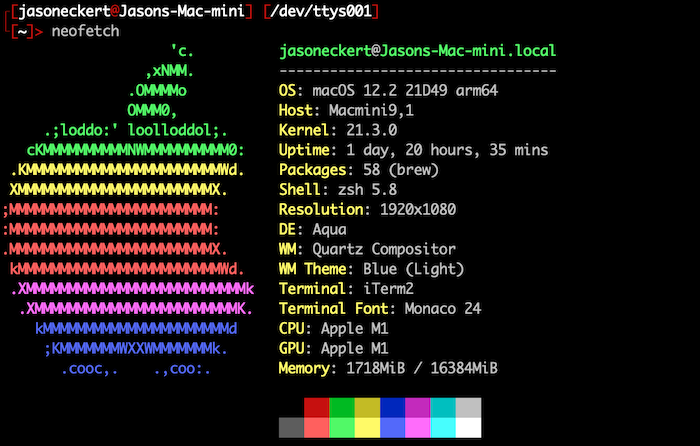

Let’s compare two different Mac computers running the same version of macOS. One has an Intel Core i9 CISC processor and 64GB of memory, while the other has the Apple M1 RISC processor and 16GB of memory. Following is the output of neofetch for each one (the M1 system uses an oh-my-zsh theme for the command prompt):

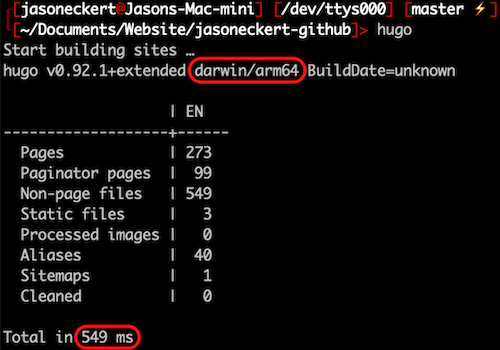

This HTML website is generated from source files in Markdown format using the hugo static site generator, and I sync my website files across all of my computers. This means that I can composite my website on both the Intel Core i9 CISC processor and the Apple M1 RISC processor using the same version of macOS and hugo:

The Intel Core i9 took 6126 milliseconds (about 6.1 seconds) to generate all 273 pages of this website, while the Apple M1 took only 549 milliseconds (just over half a second). The Apple M1 was over 11 times faster!
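The "over 11 times" figure is just the ratio of the two build times. Here is a minimal sketch of that arithmetic in Python; the function and variable names are my own for illustration and are not anything hugo provides:

```python
def speedup(slow_ms: float, fast_ms: float) -> float:
    """Return how many times faster the fast run is than the slow run."""
    return slow_ms / fast_ms


# Build times reported by hugo in this post, in milliseconds.
intel_i9_ms = 6126
apple_m1_ms = 549

ratio = speedup(intel_i9_ms, apple_m1_ms)
print(f"Apple M1 speedup over Intel Core i9: {ratio:.1f}x")
```

A single run on each machine is a small sample, so treat the ratio as a rough indicator rather than a precise benchmark.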

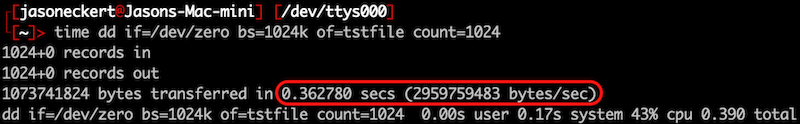

While both systems use solid state storage that is soldered to the motherboard, a difference in the speed of this storage between systems could explain the hugo results. So I ran the time command to measure the time it takes to create a 1073 MB file (called tstfile) using the dd command on each system:

As you can see, the results were nearly identical (0.36 seconds to create the same file), so storage speed is unlikely to have contributed to the hugo results.
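If you want to repeat a similar storage check without dd, the following Python sketch times a sequential write of zeros to a file. It is only an approximation of the dd test above, not the exact command I ran: the function name, the 1 MiB chunk size, and the call to `os.fsync` are assumptions of this sketch.

```python
import os
import tempfile
import time


def time_file_write(path, total_bytes, chunk_bytes=1024 * 1024):
    """Write total_bytes of zeros to path and return the elapsed seconds."""
    chunk = bytes(chunk_bytes)  # a reusable buffer of zero bytes
    remaining = total_bytes
    start = time.perf_counter()
    with open(path, "wb") as f:
        while remaining > 0:
            n = min(chunk_bytes, remaining)
            f.write(chunk[:n])
            remaining -= n
        f.flush()
        os.fsync(f.fileno())  # ask the OS to push buffered data to storage
    return time.perf_counter() - start


# Kept small so the demo is quick; use 1024 ** 3 (1 GiB) to approximate
# the 1073 MB tstfile from the test above.
size = 64 * 1024 * 1024
with tempfile.TemporaryDirectory() as tmp:
    target = os.path.join(tmp, "tstfile")
    elapsed = time_file_write(target, size)
    print(f"wrote {size / 1e6:.0f} MB in {elapsed:.3f} s")
```

Because of operating system caching, numbers from a test like this (or from dd) say more about whether two systems are in the same ballpark than about the raw speed of the drive.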

Another hardware feature that could explain the hugo results is memory speed. The Intel Core i9 uses 64GB of 2666 MHz DDR4 memory soldered to the motherboard near the processor, while the Apple M1 uses 16GB of 4266 MHz low-power LPDDR4X memory soldered directly next to the processor. While LPDDR4X uses less power, each of its channels is also narrower than a DDR4 channel, so its higher speed rating does not translate directly into proportionally higher bandwidth. Thus, the 4266 MHz LPDDR4X in the Apple M1 is likely on par with (or a bit faster than) the 2666 MHz DDR4 in the Intel Core i9. Being closer to the processor likely gives the LPDDR4X a small performance boost as well.
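To put rough numbers on this, theoretical peak memory bandwidth is the transfer rate multiplied by the bus width in bytes. The sketch below treats the quoted MHz figures as transfer rates (MT/s) and assumes a 128-bit total memory bus on both systems. The bus widths are an assumption of this example, not something measured in this post, so check them against the specifications for your own hardware.

```python
def peak_bandwidth_gbs(mega_transfers_per_sec, bus_width_bits):
    """Theoretical peak bandwidth in GB/s: MT/s times bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return mega_transfers_per_sec * bytes_per_transfer / 1000


# Transfer rates come from the post; the 128-bit bus widths are ASSUMED.
ddr4_gbs = peak_bandwidth_gbs(2666, 128)
lpddr4x_gbs = peak_bandwidth_gbs(4266, 128)

print(f"DDR4 at 2666 MT/s:    {ddr4_gbs:.1f} GB/s")
print(f"LPDDR4X at 4266 MT/s: {lpddr4x_gbs:.1f} GB/s")
```

Under those assumptions the peak figure favors the LPDDR4X, but peak bandwidth is a ceiling rather than what a single program like hugo actually achieves.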

All of this aside, differences in memory speed can’t fully explain the difference we saw with the hugo results, since hugo isn’t a complex or graphical app. Instead, hugo primarily relies on processor calculations for generating website content from Markdown files. Thus, most of the difference in hugo speed between the Intel Core i9 and Apple M1 is likely due to the difference between RISC and CISC architecture.

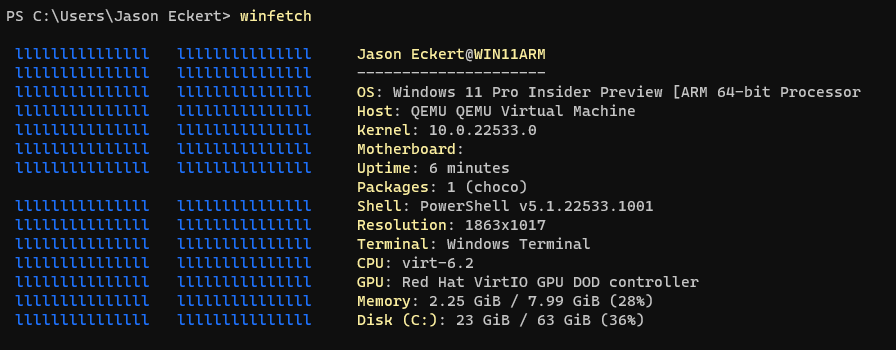

Now, let’s see if Windows 11 shows the same difference between ARM RISC and Intel CISC using hugo. I installed Windows 11 Professional for ARM within a virtual machine (8GB of memory) using the Universal Turing Machine (UTM) app on the Apple M1. UTM leverages the native Hypervisor framework in macOS alongside the open source QEMU emulator to achieve near-native performance for ARM-based operating systems running within virtual machines. Following is the winfetch (Windows equivalent of neofetch) output from a PowerShell window in that virtual machine:

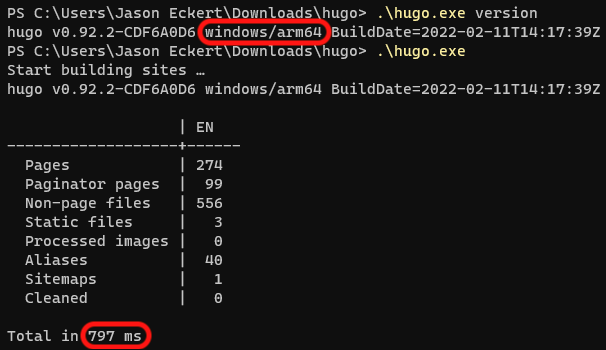

Next, I installed the latest ARM version of hugo for Windows and composited the current version of my website after roughing in the first part of this blog post (which increased the size to 274 pages):

The virtual machine running Windows 11 for ARM on the Apple M1 took 797 milliseconds (0.8 seconds) to generate the 274 pages of this website, which is slower than the 549 milliseconds needed to generate 273 pages earlier on the native macOS operating system. At least some of this difference can be explained by the performance cost of running hugo within a virtual machine.

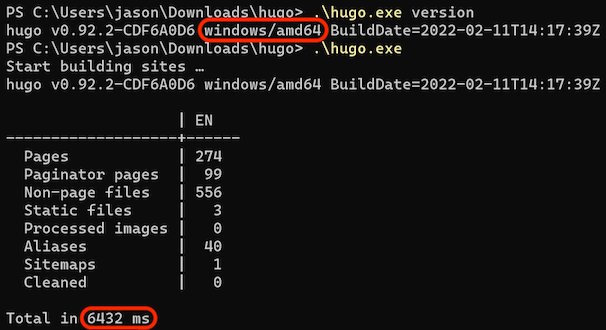

Next, I installed the latest version of hugo for Windows on my Thinkpad (Intel Core i7 with Samsung PM981a NVMe SSD and 32GB of 2400 MHz DDR4 memory running Windows 11 Professional) and composited the same version of my website:

The Core i7 system running Windows 11 took 6432 milliseconds (6.4 seconds) to generate the 274 pages of this website. As expected, this is a bit slower than the 6126 milliseconds it took the more powerful Core i9 running macOS to generate the 273 pages earlier.

However, Windows 11 running within a virtual machine on the Apple M1 RISC system still composited this website about 8 times faster than Windows 11 installed natively on the Intel Core i7 CISC system, and with only a quarter of the memory. Once again, this disparity is most likely due to the difference between RISC and CISC architecture.
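Since the Windows runs generated 274 pages rather than 273, one way to compare all four runs fairly is to normalize each to milliseconds per page. The sketch below does that using only the timings reported in this post; the labels and the choice of the native M1 run as the baseline are my own for illustration.

```python
# (label, total build time in ms, pages generated), all taken from this post.
runs = [
    ("macOS, Apple M1", 549, 273),
    ("macOS, Intel Core i9", 6126, 273),
    ("Windows 11 VM, Apple M1", 797, 274),
    ("Windows 11, Intel Core i7", 6432, 274),
]


def ms_per_page(total_ms, pages):
    """Average build time per generated page, in milliseconds."""
    return total_ms / pages


# Use the native macOS run on the M1 as the reference point.
baseline = ms_per_page(549, 273)

for label, total_ms, pages in runs:
    per_page = ms_per_page(total_ms, pages)
    print(f"{label:<26} {per_page:6.2f} ms/page  {per_page / baseline:5.1f}x baseline")
```

The one extra page barely moves the numbers, which suggests the ratios quoted above are not an artifact of the slightly larger site.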

Looks like Kate and Dade from Hackers were correct. Go figure.